Fair and Relevant Credit Decisions: Building Credit Systems That Reduce Exclusion

Artificial intelligence is transforming how lenders assess creditworthiness. AI-driven credit decisioning enables faster decisions, more personalized pricing, and fairer access to credit for people historically excluded from traditional systems. Ethical AI in financial services promises to widen opportunity, but speed and precision alone don’t guarantee fairness. Without deliberate choices about which data matters, how bias is tested, and where human oversight remains essential, AI credit models risk hard-coding inequality into automated decisions.

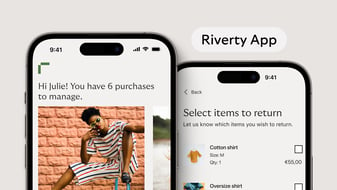

At Riverty, we believe better technology should reduce unfair exclusion, especially for thin-file consumers who lack traditional credit histories. This means building responsible AI in credit scoring where fairness is engineered, not assumed, and where data is used with consent, not through surveillance.

Why Precision Doesn’t Equal Fairness

A model can be statistically accurate and still be ethically wrong. Consider a lending algorithm that uses purchasing behavior as a risk signal. The model might learn that buying certain brands correlates with higher default rates in training data, but correlation isn’t causation; the purchase doesn’t cause someone to miss payments. It’s a proxy variable that stands in for other factors the model can’t directly observe.

When credit decisions rely on proxy variables, they create opaque pathways to discrimination. Someone might receive worse loan terms not because of actual creditworthiness, but because their purchase pattern resembles that of other groups the model flagged as higher risk. The decision feels arbitrary because it is – optimized for prediction, not for relevance or fairness.

What matters is whether the signals used in credit assessment are causally relevant to repayment ability, whether bias in credit scoring has been tested across the full credit lifecycle, and whether outcomes can be explained and contested.

Where Bias Enters AI Credit Systems

Discrimination in AI-driven credit decisioning doesn’t usually come from malicious intent. It emerges from technical choices, data gaps, and organizational blind spots.

Historical bias is persistent. Credit models learn from past decisions, but if those decisions reflected unequal access or exclusionary practices, the model encodes that history as natural risk. Groups historically excluded from credit are structurally disadvantaged because absence of data gets interpreted as absence of creditworthiness.

Sampling bias creates another layer of risk. Digital-first underwriting can under-represent people with limited connectivity, unstable housing, or low digital literacy. When the training population doesn’t reflect the target population, models underperform on groups less visible in the data.
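One way to make this concrete is to compare each group's share of the training data with its share of the population the lender intends to serve. The sketch below is illustrative only: the function name, the group labels, and the 5-percentage-point tolerance are assumptions, not a description of any production system.

```python
from collections import Counter


def representation_gaps(training_groups, target_shares, tolerance=0.05):
    """Return groups whose share of the training data falls short of their
    share of the target population by more than `tolerance`."""
    counts = Counter(training_groups)
    total = sum(counts.values())
    gaps = {}
    for group, target_share in target_shares.items():
        train_share = counts.get(group, 0) / total if total else 0.0
        shortfall = target_share - train_share
        if shortfall > tolerance:
            gaps[group] = round(shortfall, 3)
    return gaps


# Hypothetical data: 90% of training rows come from one segment,
# while the target market is assumed to be split 70/30.
training = ["urban"] * 90 + ["rural"] * 10
target = {"urban": 0.7, "rural": 0.3}
print(representation_gaps(training, target))
```

A shortfall like this does not prove the model is unfair, but it signals where performance should be checked separately before the model is used on that group.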

Measurement bias complicates things further. Even when the same variable exists across groups – income, employment stability, expenses – it can be measured inconsistently. Informal work or cash-based livelihoods mean that reliable signals for one group become noise for another.

Feedback loops make these problems self-reinforcing. When a system offers worse terms to a group, it may increase delinquency rates in that group – not because the original risk assessment was accurate, but because the terms themselves created financial stress. The model then “learns” its original pattern was correct, entrenching disadvantage.

Objective misalignment creates structural problems. If the system optimizes purely for profitability – targeting high-margin customers, steering people toward expensive products, exploiting behavioral vulnerabilities – it can be privately rational while being socially harmful.

Fair Access Through Responsible Intelligence

The question isn’t whether AI should be used in credit; it’s how to engineer fairness into the lending system rather than assume it emerges naturally. This starts with explicit limits on proxy variables. Not every signal that improves predictive performance belongs in a credit model. Social network data, brand preferences in purchasing, or behavioral patterns that correlate with protected characteristics should be excluded, not because they don’t predict risk, but because they create pathways to discrimination that borrowers can’t understand or challenge.
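A simple first-pass screen for proxy candidates is to measure how strongly each candidate feature correlates with a protected attribute in an audit sample. The sketch below uses a plain Pearson correlation; the feature names, the 0.5 threshold, and the existence of a labeled audit sample are all assumptions. A linear screen like this will miss nonlinear proxies and proxies formed by combinations of features, so it is a starting point rather than a complete test.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / sqrt(var_x * var_y)


def flag_proxy_candidates(features, protected, threshold=0.5):
    """Names of features whose absolute correlation with the protected
    attribute meets or exceeds `threshold` (an assumed cut-off)."""
    return sorted(
        name
        for name, values in features.items()
        if abs(pearson(values, protected)) >= threshold
    )


# Hypothetical audit sample: 1 marks membership of a protected group.
protected = [1, 1, 1, 0, 0, 0]
features = {
    "buys_brand_x": [1, 1, 0, 0, 0, 0],
    "monthly_net_cash_flow": [200, 800, 500, 300, 700, 500],
}
print(flag_proxy_candidates(features, protected))
```

Flagged features would then go to a human decision about exclusion; the screen itself does not decide what is ethically acceptable.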

Bias testing needs to happen across the full credit lifecycle, not just at approval. This means monitoring for disparate impact during onboarding, pricing, limit-setting, and collections. It means separating predictive performance from ethical acceptability – a model that performs well on historical data may still fail fairness criteria if it perpetuates exclusion.
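As an illustration, a disparate impact check can be run separately for each lifecycle stage by comparing each group's favorable-outcome rate with that of a reference group. In the sketch below, the stage names, group labels, and data are hypothetical, and the 0.8 threshold is borrowed from the "four-fifths" rule of thumb as an assumed screening value, not as a legal standard for lending.

```python
def favorable_rate(outcomes):
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    return sum(outcomes) / len(outcomes)


def disparate_impact_by_stage(stage_outcomes, reference_group, threshold=0.8):
    """For every stage and non-reference group, report the ratio of its
    favorable rate to the reference group's rate and whether it falls
    below `threshold`."""
    report = {}
    for stage, by_group in stage_outcomes.items():
        ref_rate = favorable_rate(by_group[reference_group])
        for group, outcomes in by_group.items():
            if group == reference_group:
                continue
            ratio = favorable_rate(outcomes) / ref_rate if ref_rate else float("nan")
            report[(stage, group)] = {
                "ratio": round(ratio, 2),
                "flag": ratio < threshold,
            }
    return report


# Hypothetical outcomes per stage and group.
outcomes = {
    "approval": {"A": [1, 1, 1, 1, 0], "B": [1, 1, 0, 0, 0]},
    "limit_increase": {"A": [1, 1, 0, 0], "B": [1, 1, 0, 0]},
}
for key, result in disparate_impact_by_stage(outcomes, reference_group="A").items():
    print(key, result)
```

Running the same check on pricing and collections outcomes would follow the same pattern, once "favorable" is defined for each stage.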

Human oversight in AI systems remains essential. Automated systems should augment credit professionals, not replace them. When edge cases arise, when data quality is uncertain, or when automated decisions would produce unjust outcomes, human judgment needs to be part of the process.
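A minimal sketch of how such a hand-off could be expressed as a routing rule follows. The `Application` fields, the score scale, and all thresholds are hypothetical; a real policy would be set by credit and compliance teams and would likely include more triggers than the two shown here.

```python
from dataclasses import dataclass


@dataclass
class Application:
    score: float         # hypothetical model score in [0, 1]; higher = lower risk
    missing_fields: int  # count of required data fields that could not be verified


def route(app, cutoff=0.6, margin=0.05, max_missing=2):
    """Send uncertain cases to a person; automate only the clear ones."""
    if app.missing_fields > max_missing:
        return "human_review"  # data quality is too uncertain to automate
    if abs(app.score - cutoff) <= margin:
        return "human_review"  # borderline score: treat as an edge case
    return "auto_approve" if app.score > cutoff else "auto_decline"


print(route(Application(score=0.90, missing_fields=0)))
print(route(Application(score=0.62, missing_fields=0)))
print(route(Application(score=0.90, missing_fields=3)))
```

Declined applicants would still need a route to contest the outcome; that recourse path sits outside this sketch.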

Data sovereignty and consent create the foundation for responsible use of data in finance. Rather than extracting signals through surveillance, ethical AI in financial services should enable borrowers to actively choose which verified data to share for specific lending decisions. This transforms data from a tool for profiling into a negotiable resource that borrowers control, making underwriting a collaboration, not an extraction.
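In code, consent-scoped data use can be as simple as filtering an applicant's data down to the fields they have agreed to share for a given purpose before anything reaches the model. The field names, purpose labels, and the shape of the consent record below are assumptions made for illustration.

```python
def consented_features(applicant_data, consents, purpose):
    """Keep only the fields the borrower has agreed to share for `purpose`.
    Fields without recorded consent are dropped, not defaulted."""
    allowed = consents.get(purpose, set())
    return {name: value for name, value in applicant_data.items() if name in allowed}


# Hypothetical applicant record and consent choices.
applicant = {
    "verified_income": 3200,
    "account_cash_flow": 450,
    "device_type": "android",
}
consents = {"installment_loan": {"verified_income", "account_cash_flow"}}

print(consented_features(applicant, consents, purpose="installment_loan"))
print(consented_features(applicant, consents, purpose="marketing"))
```

Defaulting to an empty set when no consent is recorded for a purpose means that missing consent results in no data being used, rather than all of it.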

Ongoing monitoring prevents feedback loops from entrenching disadvantage. Credit systems should detect when outcomes diverge across groups, when model performance degrades for underrepresented populations, or when pricing strategies amplify rather than reduce exclusion.
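One simple monitoring signal is the gap between the highest and lowest favorable-outcome rates across groups, tracked against a baseline period. The sketch below raises an alert when that gap widens by more than an assumed tolerance; the record format, group labels, and the 0.05 tolerance are hypothetical.

```python
def group_rates(records):
    """records: iterable of (group, outcome) pairs, outcome 1 = favorable."""
    totals, favorable = {}, {}
    for group, outcome in records:
        totals[group] = totals.get(group, 0) + 1
        favorable[group] = favorable.get(group, 0) + outcome
    return {group: favorable[group] / totals[group] for group in totals}


def divergence_alert(baseline, current, max_gap_increase=0.05):
    """Compare the spread of group rates between two periods."""
    base, cur = group_rates(baseline), group_rates(current)
    base_gap = max(base.values()) - min(base.values())
    cur_gap = max(cur.values()) - min(cur.values())
    return {
        "baseline_gap": round(base_gap, 3),
        "current_gap": round(cur_gap, 3),
        "alert": cur_gap - base_gap > max_gap_increase,
    }


# Hypothetical approval outcomes for two groups in two periods.
baseline = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 7 + [("B", 0)] * 3
current = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 5 + [("B", 0)] * 5
print(divergence_alert(baseline, current))
```

The same comparison could be applied to per-group error rates or pricing outcomes to catch performance degradation, not only approval gaps.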

At Riverty, we’re embedding these principles into our credit scoring approach – treating fairness as infrastructure that must be designed, tested, and governed, not assumed to emerge naturally from better data alone.

Why This Matters for Sustainable Inclusion

Hyperindividualized credit has genuine potential to expand access for people poorly served by traditional bureau-centric scoring. Cash-flow-based underwriting, verified transaction data, and alternative signals can help people demonstrate reliability in ways that rigid, backward-looking credit histories cannot.

But that potential for inclusive credit scoring only becomes real when fairness is engineered from the start, not retrofitted after harm occurs. This means making deliberate choices about data sources, testing rigorously for bias, maintaining clear separation between prediction and acceptability, and building recourse mechanisms that make credit decisions explainable and allow people to challenge them.

Riverty’s approach is grounded in this principle: better data and better models should reduce unfair exclusion, not replace it with algorithmic opacity. “Precision” and “fairness” aren’t opposing goals – they’re both essential to building credit systems that work for borrowers, lenders, and society. And “profitable” and “responsible” aren’t contradictions – they’re prerequisites for sustainable performance.

Frequently Asked Questions

A fair decision relies on signals causally relevant to repayment ability rather than proxies that correlate with protected characteristics. The data must be accurate and verifiable, the decision explainable in plain language, and borrowers must have meaningful recourse when data is wrong or circumstances change. Statistical accuracy alone isn’t sufficient – decisions must be understandable, contestable, and free from discrimination based on factors unrelated to actual creditworthiness.

AI reduces bias when deliberately designed with fairness as a first-order requirement. This means testing models for disparate impact across demographics, excluding proxy variables that create discrimination pathways, and using richer data – like cash-flow patterns and verified transaction history – that reflects actual repayment capacity. It also requires ongoing monitoring to detect feedback loops, performance degradation for underrepresented populations, and situations where human oversight is needed for edge cases.

Proxy variables correlate with credit risk in historical data but have no causal relationship to repayment ability. Purchasing certain brands, living in specific postal codes, or using particular devices might correlate with higher default rates – not because these factors cause defaults, but because they’re indirect signals of income or neighborhood characteristics. Proxies are risky because they create pathways to bias that borrowers can’t see, understand, or challenge, perpetuating exclusion while appearing statistically sound.

Statistical accuracy measures how well a model predicts outcomes in historical data, but doesn’t account for whether predictions are ethically acceptable or causally valid. A model can be highly accurate and still encode historical discrimination, rely on proxies that perpetuate exclusion, or optimize for profitability in ways that exploit vulnerable borrowers. Ethical AI in financial services requires not just predictive performance, but also fairness testing, explainability, and contestability.

Human oversight is essential for handling edge cases, uncertain data, and situations where automated decisions would produce arbitrary or unjust outcomes. While AI processes diverse data efficiently, it cannot account for context, qualitative information, or circumstances outside the model’s training data. Credit professionals can review borderline cases, investigate discrepancies, override automated decisions when warranted, and provide pathways for borrowers to contest outcomes, making systems more robust and trustworthy.